Most large online stores did not begin with sophisticated infrastructure. They started lean, often on shared hosting, validating products, testing demand, and learning their market. At that stage, shared hosting made sense: it was affordable, easy to manage, and sufficient for low to moderate traffic.

But e-commerce success has a way of exposing infrastructure limits very quickly. As traffic grows, catalogs expand, and transactions become constant, shared hosting stops being a cost-saving choice and starts becoming a business risk. This is why nearly every serious, high-revenue online store eventually abandons shared hosting—not because it failed outright, but because it can no longer keep up with the realities of scale.

Shared Hosting Works Until Performance Starts Affecting Revenue

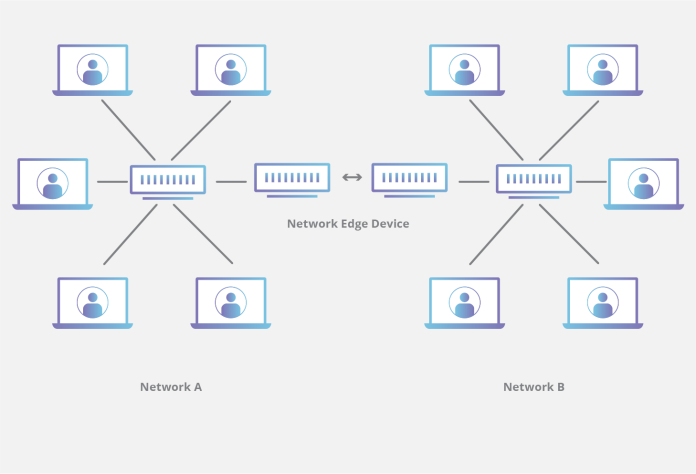

Shared hosting environments are designed for efficiency, not intensity. Multiple websites share the same server resources, including CPU, memory, storage, and network bandwidth. When traffic is light and workloads are predictable, this model performs adequately.

Large online stores operate under very different conditions. Product searches, dynamic pricing, inventory checks, personalized recommendations, and real-time checkout processes place continuous demand on server resources. In shared environments, these demands compete with unrelated websites hosted on the same machine. The result is performance variability: pages load more slowly at peak times, background processes lag, and checkout flows become inconsistent.

In e-commerce, performance degradation translates directly into lost revenue. Customers do not wait for slow product pages or delayed payment confirmations. They leave. At scale, even small performance dips compound into significant financial loss, turning shared hosting from a budget-friendly option into a silent drain on sales.
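
To make the compounding effect concrete, the arithmetic can be sketched in a few lines of Python. This is a rough illustration rather than a measurement: the function name `estimated_revenue_loss` and every input figure (sessions, conversion rate, size of the drop, order value) are hypothetical assumptions chosen only to show the calculation.

```python
def estimated_revenue_loss(sessions, baseline_conversion, conversion_drop, average_order_value):
    """Estimate revenue lost when conversion falls by a relative fraction.

    conversion_drop is relative: 0.05 means the conversion rate
    falls to 95% of its baseline value.
    """
    baseline_orders = sessions * baseline_conversion
    degraded_orders = baseline_orders * (1 - conversion_drop)
    return (baseline_orders - degraded_orders) * average_order_value


# Hypothetical inputs, not real store data.
loss = estimated_revenue_loss(
    sessions=100_000,          # visits in the period (assumed)
    baseline_conversion=0.02,  # 2% of visits place an order (assumed)
    conversion_drop=0.05,      # slowdown costs 5% of those orders (assumed)
    average_order_value=50.0,  # currency units per order (assumed)
)
print(f"Estimated loss for the period: {loss:.2f}")
```

With these assumed inputs, a 5% relative drop in conversion removes about 100 orders from the period. The same proportional dip costs more as session counts grow, which is why the effect is easy to overlook early and hard to ignore at scale.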

Traffic Spikes Reveal the Weakest Links

One of the defining characteristics of e-commerce growth is uneven traffic. Marketing campaigns, seasonal sales, influencer promotions, and flash deals can generate sudden surges in visitors. While shared hosting can handle steady, moderate loads, it struggles under sharp spikes.

When traffic increases rapidly, shared environments often respond by throttling resources to maintain overall server stability. This protects the hosting provider but harms the individual store. Pages slow down, carts time out, and payment gateways fail to respond quickly enough. The very moments designed to drive growth instead expose infrastructure fragility.

Large online stores learn quickly that peak traffic, not average traffic, defines infrastructure requirements. Shared hosting is optimized for averages, not extremes, making it unsuitable for brands that rely on promotional momentum and high-volume campaigns.
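
The gap between average and peak load can be shown with a short Python sketch. The traffic samples, the `capacity_summary` helper, and the 120% headroom figure are all hypothetical assumptions for illustration, not a sizing recommendation.

```python
import math
import statistics


def capacity_summary(requests_per_minute, headroom_percent=120):
    """Compare average and peak load, then size capacity from the peak.

    headroom_percent=120 means provisioning for 120% of the observed peak.
    """
    average = statistics.mean(requests_per_minute)
    peak = max(requests_per_minute)
    return {
        "average": average,
        "peak": peak,
        "peak_to_average": peak / average,
        "target_capacity": math.ceil(peak * headroom_percent / 100),
    }


# Hypothetical per-minute request counts with one flash-sale spike.
samples = [100, 120, 110, 90, 900, 130, 110, 100]
summary = capacity_summary(samples)
for name, value in summary.items():
    print(f"{name}: {value}")
```

In this made-up series the spike is more than four times the average, so an environment sized for the average would be overwhelmed during the one minute that matters most.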

Checkout Reliability Becomes Non-Negotiable

As order volumes increase, the checkout process becomes the most critical and sensitive component of the platform. Payment authorization, fraud checks, inventory updates, and order confirmations must execute reliably and in sequence. Any delay or failure introduces errors that affect both customers and internal operations.

Shared hosting environments offer limited control over how resources are allocated during these moments. Background activity from other tenants can interfere with time-sensitive processes, increasing the likelihood of failed transactions or duplicated orders. For large stores, these issues generate support tickets, refunds, and reputational damage that far outweigh the cost savings of shared infrastructure.

Dedicated environments allow checkout workflows to be isolated, prioritized, and optimized, ensuring that payment-related processes are never disrupted by unrelated workloads.

Security and Compliance Pressures Increase with Scale

Growth brings scrutiny. Large online stores handle more customer data, process higher payment volumes, and attract greater attention from malicious actors. Security expectations rise accordingly, both from customers and from payment providers.

Shared hosting introduces inherent limitations in isolation. While reputable providers implement safeguards, the presence of multiple tenants on the same system expands the attack surface. For stores subject to compliance requirements such as PCI DSS, shared environments can complicate audits and increase remediation costs.

As brands mature, they seek infrastructure models that simplify security architecture and compliance management. Dedicated hosting offers clearer boundaries, stronger isolation, and greater visibility into system behavior—qualities that become increasingly valuable as transaction volumes and regulatory obligations grow.

Customization and Optimization Become Strategic Needs

Early-stage stores rely on standard configurations and off-the-shelf platforms. Large online stores, by contrast, require customization. Advanced search capabilities, real-time inventory syncing, personalized user experiences, and complex integrations demand infrastructure that can be tuned precisely.

Shared hosting limits this flexibility. Restrictions on server configuration, software versions, and performance tuning create friction for development teams. Innovation slows as teams work around platform constraints rather than building features.

Dedicated servers remove these limitations, allowing stores to optimize databases, caching layers, and application stacks according to their specific workload. This control supports faster iteration, better performance, and more sophisticated customer experiences.

Predictability Replaces Convenience

At scale, unpredictability is the enemy. Shared hosting introduces variables that are difficult to control, from neighboring traffic patterns to provider-level resource management policies. For large stores, this unpredictability complicates planning and increases operational stress.

Dedicated infrastructure offers predictability. Resources are reserved, performance is consistent, and capacity planning becomes straightforward. Finance teams can forecast infrastructure costs accurately, while operations teams gain confidence that systems will behave reliably during critical periods.

This shift from convenience to predictability reflects a broader maturation process. Infrastructure evolves from a supporting tool into a strategic asset.

Why Large Brands Rarely Go Back

Once online stores migrate away from shared hosting, they rarely return. The benefits of dedicated environments (stability, control, security, and scalability) become embedded in daily operations. Infrastructure stops being a bottleneck and starts enabling growth.

This is why many established e-commerce brands partner with providers such as Atlantic.Net, whose infrastructure is designed to support high-traffic, transaction-heavy platforms without the compromises inherent in shared environments.

Conclusion

Shared hosting plays an important role in the early stages of e-commerce, but it is not designed for sustained growth, high transaction volumes, or operational maturity. As online stores scale, the demands placed on infrastructure change fundamentally. Performance becomes revenue-critical, reliability becomes trust-critical, and security becomes non-negotiable.

Large online stores abandon shared hosting not because it fails completely, but because success exposes its limits. Dedicated infrastructure represents a deliberate move toward stability, control, and long-term resilience—qualities that serious e-commerce brands require to compete and grow in an increasingly demanding digital marketplace.